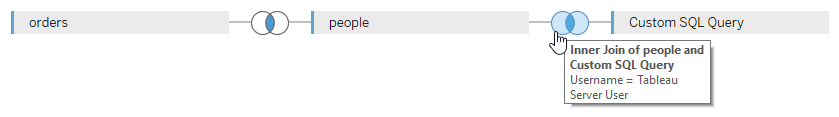

Imagine you have Tableau dashboards that require the use of row-level security on data coming from PostgreSQL, but the connection to that database is established through the same technical user for everyone. How can you achieve that? Is it even possible? Short answer: it’s possible – and it’s not even that complicated, provided you’re not afraid of some PL/pgSQL scripting, PostgreSQL session IDs, and clicking on that mysterious “Initial SQL” button in Tableau Desktop. For full disclosure I’d like to mention that I found the original idea for this approach on my colleague Bryant Howell’s excellent blog, where he outlines the process for Microsoft SQL Server. That said, the approach I’m showing here is not a mere translation from SQL Server to PostgreSQL, but I also added a few more features.

Continue reading →